14. Heston Hedging under Stochastic Local Volatility

We use the cppfm library, which implements the method of van der Stoep et al. [2014] for LSV calibration.

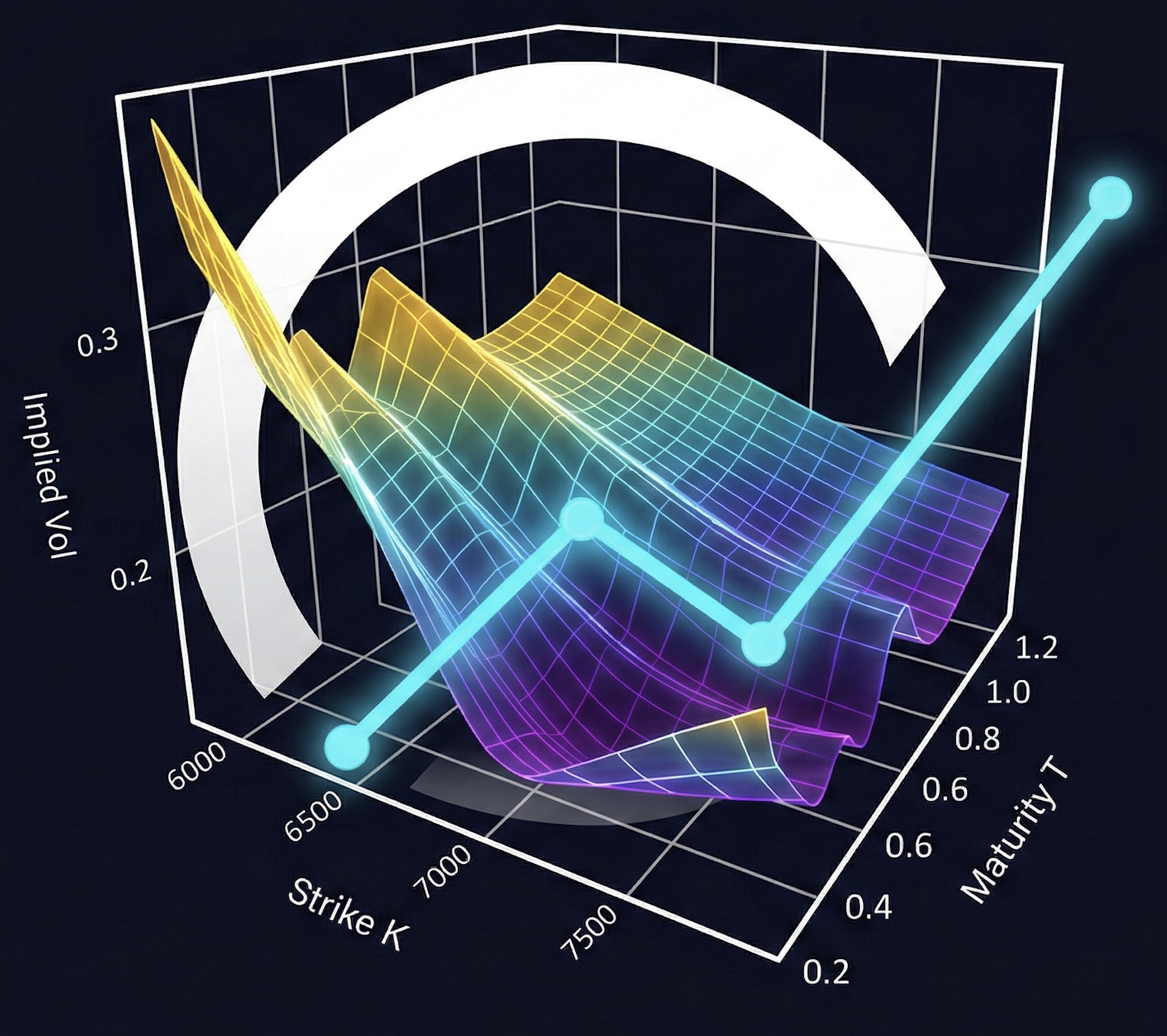

LSV Dynamics:
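For reference, a Heston-type LSV model is commonly written as below. The notation is an assumption: it borrows the parameter names from the Heston calibration output in this chapter (\(\kappa\), \(\bar v\), \(\sigma_v\), \(\rho\)) and takes a constant rate \(r\) with no dividends.

\[
\begin{aligned}
dS_t &= r S_t\,dt + L(S_t,t)\,\sqrt{v_t}\,S_t\,dW_t^S,\\
dv_t &= \kappa(\bar v - v_t)\,dt + \sigma_v\sqrt{v_t}\,dW_t^v,\\
d\langle W^S, W^v\rangle_t &= \rho\,dt.
\end{aligned}
\]

Setting \(L \equiv 1\) recovers pure Heston.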

The leverage function \(L(S,t)\) ensures exact calibration to vanillas:
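The calibration condition is usually stated as a Markovian projection identity. The form below is a sketch chosen to agree with the update rule used in the van der Stoep iteration later in this chapter:

\[
L^2(S,t)\,\mathbb{E}[v_t \mid S_t = S] = \sigma_{\text{Dupire}}^2(S,t)
\quad\Longrightarrow\quad
L(S,t) = \frac{\sigma_{\text{Dupire}}(S,t)}{\sqrt{\mathbb{E}[v_t \mid S_t = S]}}.
\]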

| Model | Vanilla Fit | Forward Smile | Barrier Pricing |
|---|---|---|---|
| Local Vol | Exact | Flattens | Biased |
| Heston | Approximate | Realistic | Biased |
| LSV | Exact | Realistic | Accurate |

14.1. Market Data and Model Setup

Surface bounds: ((80.0, 120.0), (0.08, 2.0))

ATM 1Y IV: 18.00%

Local vol at (S=100, t=0.5): 11.76%

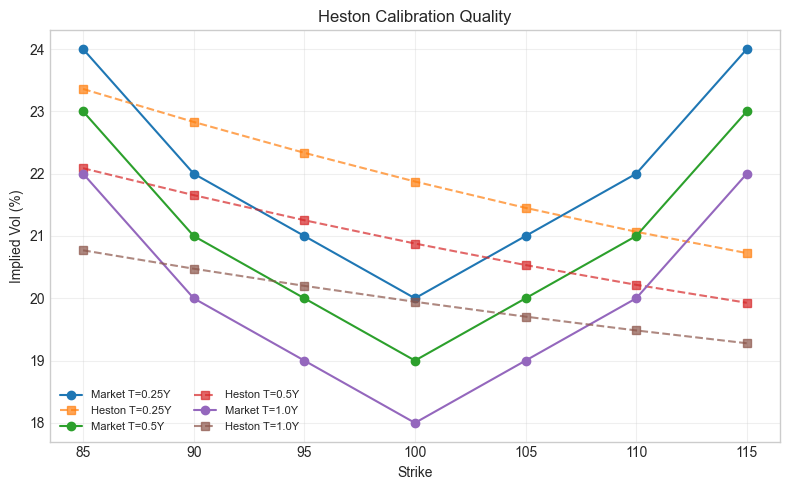

14.2. Heston Calibration

Calibrate Heston parameters to match the market vol surface before running LSV.

Calibrating Heston to iv (global search)...

<HestonModel S0=100.000000 v0=0.056019 kappa=4.000000 vbar=0.034339 sigma_v=0.200000 rho=-0.500000>

Feller condition: True
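The Feller check can be reproduced by hand from the printed parameters. The sketch below is plain Python and does not use cppfm; the helper name `feller_satisfied` is hypothetical.

```python
def feller_satisfied(kappa: float, vbar: float, sigma_v: float) -> bool:
    """Return True when 2*kappa*vbar > sigma_v**2, so the variance stays strictly positive."""
    return 2.0 * kappa * vbar > sigma_v ** 2

# Parameters copied from the calibration output above.
kappa, vbar, sigma_v = 4.0, 0.034339, 0.2
lhs = 2.0 * kappa * vbar   # drift strength of the variance process
rhs = sigma_v ** 2         # squared vol-of-vol
print(f"2*kappa*vbar = {lhs:.4f}, sigma_v^2 = {rhs:.4f}, "
      f"Feller: {feller_satisfied(kappa, vbar, sigma_v)}")
```

With these values the left-hand side is roughly seven times the right-hand side, so the condition holds with a wide margin.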

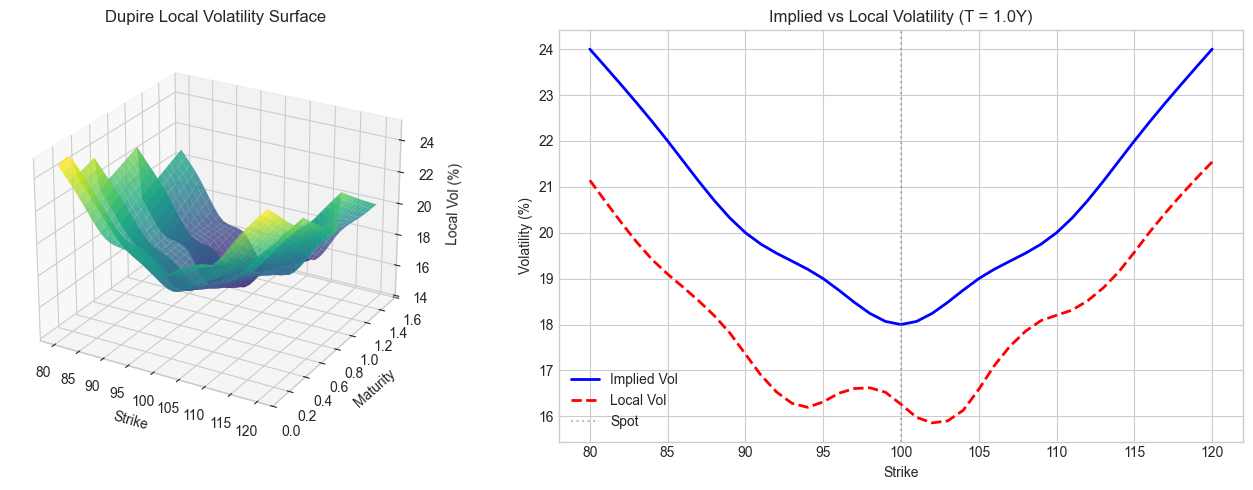

14.3. Dupire Local Volatility Surface

Dupire’s formula extracts local volatility from European option prices:
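In call-price form, and assuming a constant rate \(r\) with no dividends (an assumption, since the carry conventions are not stated here), the formula reads:

\[
\sigma_{\text{Dupire}}^2(K,T) =
\frac{\dfrac{\partial C}{\partial T} + rK\,\dfrac{\partial C}{\partial K}}
     {\tfrac{1}{2}K^2\,\dfrac{\partial^2 C}{\partial K^2}}.
\]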

With a negative skew, local vol is typically higher than implied vol at low strikes (OTM puts), because the local vol skew is roughly twice as steep as the implied vol skew.

ATM Local Vol (K=100, T=1): 16.26%

ATM Implied Vol (K=100, T=1): 18.00%

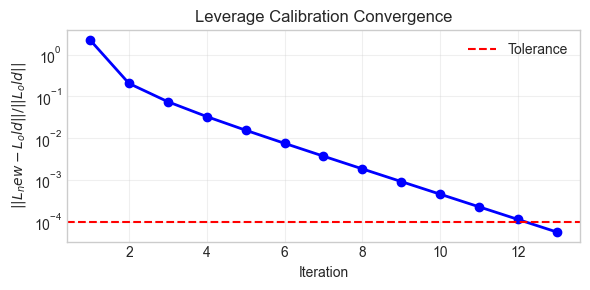

14.4. LSV Monte Carlo Simulation

Van der Stoep iteration:

1. Start with \(L^{(0)} = 1\) (pure Heston)
2. Simulate paths, bin by \((S,t)\) to get \(\mathbb{E}[v|S]\)
3. Update \(L^{(n)} = \sigma_{\text{Dupire}} / \sqrt{\mathbb{E}^{(n-1)}[v|S]}\)
4. Repeat until convergence
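The binning and update steps can be sketched for a single time slice as follows. This is a minimal NumPy illustration, not the cppfm implementation: the function names, the equal-count binning, and the toy inputs are all assumptions.

```python
import numpy as np

def conditional_variance(S, v, n_bins=20):
    """Estimate E[v | S] on equal-count bins of S.

    Returns the mean spot and the mean variance in each bin.
    """
    order = np.argsort(S)
    S_bins = np.array_split(S[order], n_bins)
    v_bins = np.array_split(v[order], n_bins)
    centers = np.array([b.mean() for b in S_bins])
    cond_var = np.array([b.mean() for b in v_bins])
    return centers, cond_var

def update_leverage(S, v, sigma_dupire, n_bins=20):
    """One leverage update at a single time slice: L = sigma_Dupire / sqrt(E[v | S])."""
    centers, cond_var = conditional_variance(S, v, n_bins)
    return centers, sigma_dupire(centers) / np.sqrt(cond_var)

# Toy check: constant variance 0.04 and a flat 20% local vol give L = 1 in every bin.
rng = np.random.default_rng(0)
S = 100.0 * np.exp(0.2 * rng.standard_normal(10_000))
v = np.full_like(S, 0.04)
centers, lev = update_leverage(S, v, lambda s: np.full_like(s, 0.20))
```

Equal-count bins keep the number of paths per bin constant, which limits the noise of the conditional mean in the tails at the cost of wider bins there.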

Calibrating leverage function...

Calibration converged in 13 iterations

Final error: 0.000058

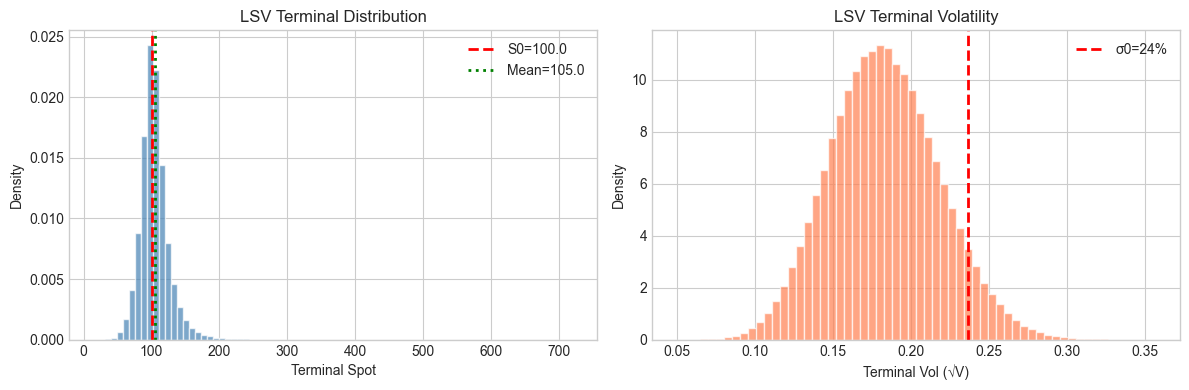

Simulating 500,000 paths with 100 steps...

Done in 8.05s

Terminal S: mean=105.01, std=22.67

Terminal V: mean=0.0347, std=0.0132

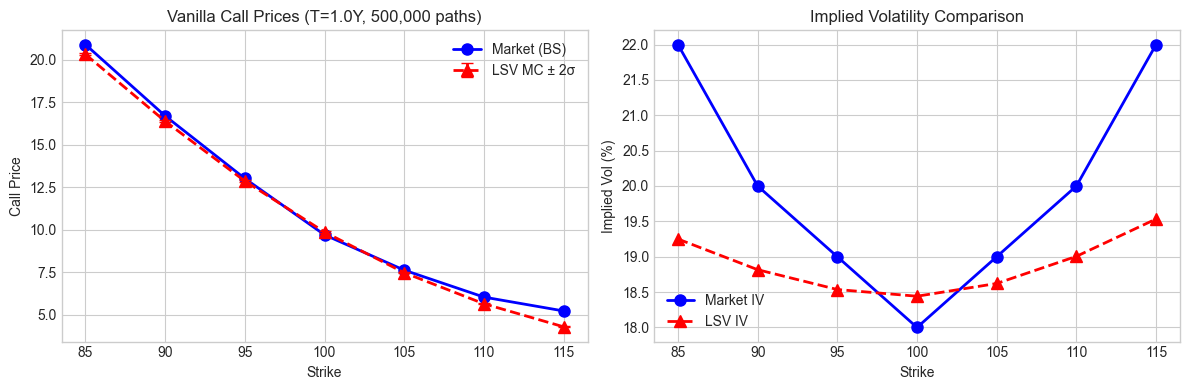

14.5. Vanilla Repricing Validation

LSV should reprice vanillas exactly (by construction). We compare MC prices to market.

IV Error (bps): mean=-111.4, std=106.3, max=275.0

Price stderr (avg): 0.0237
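An IV error in basis points can be obtained by inverting each Monte Carlo price to a Black-Scholes implied vol and subtracting the market vol. The sketch below uses bisection and only the standard library; the function names are hypothetical, and the rate of 5% is inferred from the forward printed in the diagnostics rather than stated.

```python
from math import erf, exp, log, sqrt

def norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))

def bs_call(S0, K, T, r, sigma):
    """Black-Scholes call price, no dividends."""
    d1 = (log(S0 / K) + (r + 0.5 * sigma**2) * T) / (sigma * sqrt(T))
    d2 = d1 - sigma * sqrt(T)
    return S0 * norm_cdf(d1) - K * exp(-r * T) * norm_cdf(d2)

def implied_vol(price, S0, K, T, r, lo=1e-4, hi=5.0, tol=1e-10):
    """Bisection on sigma; relies on the call price increasing in sigma."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(S0, K, T, r, mid) < price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

def iv_error_bps(mc_price, market_iv, S0, K, T, r):
    """Model-implied vol minus market vol, in basis points."""
    return (implied_vol(mc_price, S0, K, T, r) - market_iv) * 1e4

# Round trip: a price generated at 18% vol inverts back to 18%.
price = bs_call(100.0, 100.0, 1.0, 0.05, 0.18)
recovered = implied_vol(price, 100.0, 100.0, 1.0, 0.05)
```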

14.6. Diagnostic: Understanding the Repricing Error

The LSV model should reprice vanillas exactly by construction. The diagnostics below reveal:

Issue Identified: The cppfm.VolatilitySurface.local_volatility() function is returning unstable/incorrect values.

Dupire local vol requires computing:

- \(\frac{\partial C}{\partial T}\) (calendar spread sensitivity)
- \(\frac{\partial^2 C}{\partial K^2}\) (butterfly sensitivity)

These derivatives are difficult to compute stably from a discretely-sampled implied vol surface. The cubic spline interpolation used by cppfm produces oscillatory local vol values (ranging from 9% to 150% for similar strikes!), which breaks the LSV calibration.
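To see which derivatives enter, here is a minimal central-difference version of Dupire applied to a call-price function. It is a sketch, not the cppfm routine: the names, the bump sizes, and the flat 18% test surface are assumptions. On a flat surface the local vol should equal the implied vol, which gives a basic sanity check for any differentiation scheme.

```python
from math import erf, exp, log, sqrt

def bs_call(S0, K, T, r, sigma):
    """Black-Scholes call price, no dividends."""
    d1 = (log(S0 / K) + (r + 0.5 * sigma**2) * T) / (sigma * sqrt(T))
    d2 = d1 - sigma * sqrt(T)
    cdf = lambda x: 0.5 * (1.0 + erf(x / sqrt(2.0)))
    return S0 * cdf(d1) - K * exp(-r * T) * cdf(d2)

def dupire_local_vol(call, K, T, r, dK=0.5, dT=1e-3):
    """Dupire local vol from central differences on a price function call(K, T)."""
    dC_dT = (call(K, T + dT) - call(K, T - dT)) / (2.0 * dT)      # calendar spread
    dC_dK = (call(K + dK, T) - call(K - dK, T)) / (2.0 * dK)      # call spread
    d2C_dK2 = (call(K + dK, T) - 2.0 * call(K, T) + call(K - dK, T)) / dK**2  # butterfly
    return sqrt((dC_dT + r * K * dC_dK) / (0.5 * K**2 * d2C_dK2))

# Flat 18% implied vol surface: local vol should come back as 18%.
flat_surface = lambda K, T: bs_call(100.0, K, T, 0.05, 0.18)
lv = dupire_local_vol(flat_surface, 100.0, 1.0, 0.05)
```

The butterfly term sits in the denominator, so small interpolation wiggles in the price surface are amplified there, which is consistent with the oscillations described above.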

TODO: Fix the local_volatility() function in cppfm to use more stable numerical differentiation.

=== Diagnostic 1: Pure Heston MC vs Analytical ===

| Strike | Heston MC | Heston Analytical | Diff |
|---|---|---|---|
| 85 | 20.6577 | 20.6289 | +0.0288 |
| 90 | 16.8593 | 16.8293 | +0.0300 |
| 95 | 13.4427 | 13.4129 | +0.0298 |
| 100 | 10.4559 | 10.4295 | +0.0263 |
| 105 | 7.9270 | 7.9046 | +0.0224 |
| 110 | 5.8530 | 5.8361 | +0.0169 |
| 115 | 4.2108 | 4.1967 | +0.0140 |

Mean absolute error: 0.0240

=== Diagnostic 2: Implied Leverage Analysis ===

Implied leverage at T=1.0:

| Strike | E[v\|S] | σ_Dupire | L=σ/√E[v] |
|---|---|---|---|
| 31.9 | 0.0553 | 41.89% | 1.781 |
| 102.6 | 0.0346 | 15.86% | 0.853 |
| 173.3 | 0.0268 | 40.67% | 2.482 |
| 244.1 | 0.0253 | 70.58% | 4.438 |
| 314.8 | 0.0240 | 98.54% | 6.356 |
| 385.6 | 0.0250 | 126.26% | 7.985 |

Leverage stats: min=0.853, max=9.087, mean=4.326

(L≈1 means pure Heston; L>1 or L<1 indicates local vol adjustment)

=== Diagnostic 3: Local Vol vs Implied Vol ===

At T=1.0:

| Strike | IV (%) | Local Vol (%) | Ratio LV/IV |
|---|---|---|---|
| 85 | 22.00 | 19.10 | 0.868 |
| 90 | 20.00 | 17.35 | 0.868 |
| 95 | 19.00 | 16.31 | 0.859 |
| 100 | 18.00 | 16.26 | 0.903 |
| 105 | 19.00 | 16.59 | 0.873 |
| 110 | 20.00 | 18.20 | 0.910 |
| 115 | 22.00 | 19.58 | 0.890 |

ATM IV: T=1Y: 18.00%, T=2Y: 17.00%

Term structure: Decreasing

=== Diagnostic 4: Simulation Analysis ===

Time grid: 101 points (100 steps), from 0.0 to 1.0

dt = 0.0100

Forward price (S0*exp(rT)): 105.13

MC mean terminal S: 105.01

Ratio: 0.9989

OK: Terminal mean close to forward

Expected E[V_T] under Heston: 0.0347

MC mean V_T: 0.0347

Ratio: 1.0000
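The two reference values in this diagnostic follow from closed forms: the forward is \(S_0 e^{rT}\) and the CIR variance mean is \(\bar v + (v_0 - \bar v)e^{-\kappa T}\). The sketch below recomputes both from the calibrated parameters; the rate of 5% is an assumption inferred from the printed forward of 105.13.

```python
from math import exp

# Calibrated Heston parameters from the output earlier in this chapter.
S0, v0, kappa, vbar = 100.0, 0.056019, 4.0, 0.034339
r, T = 0.05, 1.0  # r is inferred from the printed forward, not stated explicitly

forward = S0 * exp(r * T)                            # risk-neutral mean of S_T
expected_vT = vbar + (v0 - vbar) * exp(-kappa * T)   # mean of the CIR variance at T

print(f"Forward: {forward:.2f}, E[V_T]: {expected_vT:.4f}")
```

The variance mean does not depend on the leverage function, so the same target applies under LSV as under pure Heston.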

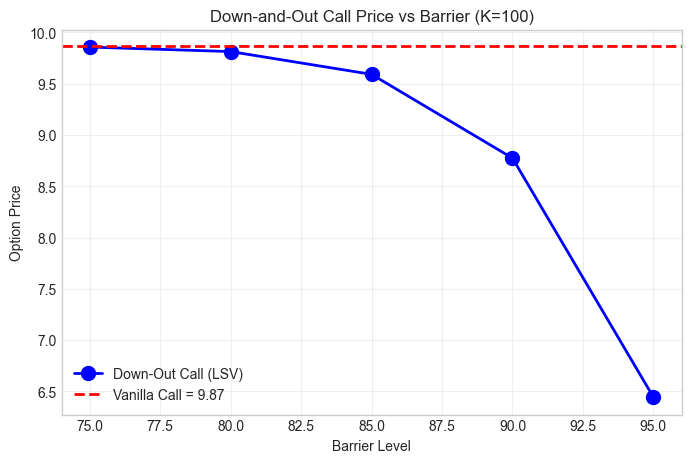

14.7. Barrier Option Pricing

For path-dependent options, model choice matters. We price down-and-out calls under LSV for barriers from 75 to 95 and compare each to the vanilla call.

Simulating full paths for barrier pricing...

Done in 15.36s

Path array shape: (500000, 101)

Vanilla call: 9.8670

DO Call (B=75): 9.8578 (99.9% of vanilla)

DO Call (B=80): 9.8141 (99.5% of vanilla)

DO Call (B=85): 9.5919 (97.2% of vanilla)

DO Call (B=90): 8.7751 (88.9% of vanilla)

DO Call (B=95): 6.4449 (65.3% of vanilla)
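Given a path array of shape (n_paths, n_times), a down-and-out call reduces to a knock-out mask on the running minimum. The sketch below is illustrative only: it uses GBM paths as a stand-in for the LSV paths, and the function name and parameters are hypothetical.

```python
import numpy as np

def down_and_out_call(paths, K, B, r, T):
    """Discretely monitored down-and-out call from an (n_paths, n_times) array."""
    alive = paths.min(axis=1) > B                      # knocked out if any grid point is at or below B
    payoff = np.maximum(paths[:, -1] - K, 0.0) * alive
    return np.exp(-r * T) * payoff.mean()

# Stand-in paths: GBM with 18% vol (assumption; the chapter uses LSV paths).
rng = np.random.default_rng(1)
n_paths, n_steps, T, r, sigma = 20_000, 100, 1.0, 0.05, 0.18
dt = T / n_steps
z = rng.standard_normal((n_paths, n_steps))
log_incr = (r - 0.5 * sigma**2) * dt + sigma * np.sqrt(dt) * z
log_paths = np.concatenate([np.zeros((n_paths, 1)), np.cumsum(log_incr, axis=1)], axis=1)
paths = 100.0 * np.exp(log_paths)

vanilla = down_and_out_call(paths, 100.0, 0.0, r, T)   # barrier at 0 never knocks out
prices = {B: down_and_out_call(paths, 100.0, B, r, T) for B in (75, 85, 95)}
```

Monitoring only at grid points misses crossings between steps, so the estimate is biased upward relative to a continuously monitored barrier.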

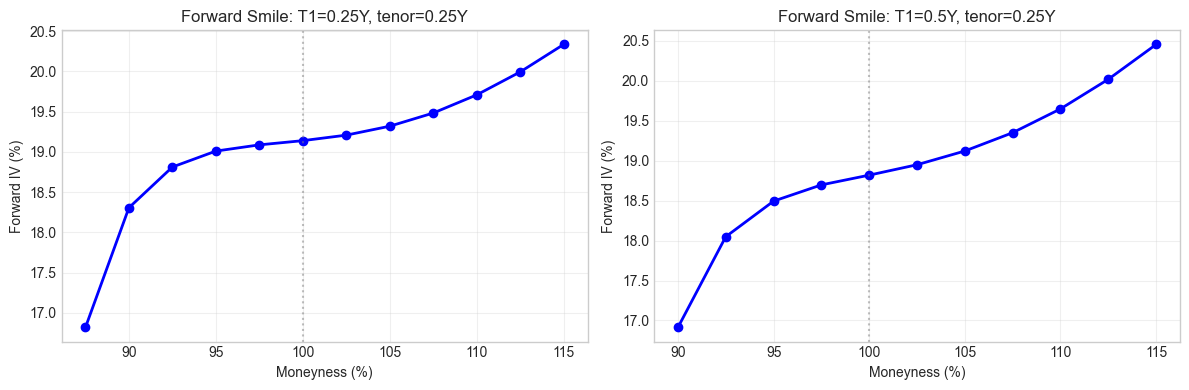

14.8. Forward Smile Dynamics

Local vol flattens the forward smile, which is unrealistic. LSV preserves it through the stochastic variance component.

ATM Forward Vol vs Spot Vol:

T1=0.25Y: Forward ATM = 19.14%, Spot ATM = 20.00%

T1=0.5Y: Forward ATM = 18.82%, Spot ATM = 20.00%
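How the forward ATM vol is extracted is not shown here. One common approach, given as an assumption, is to price a forward-start call on the return \(S_{T_2}/S_{T_1}\) from the simulated paths and then invert that price to an implied vol. The sketch below covers only the pricing step, with hypothetical names and a tiny deterministic path array.

```python
import numpy as np

def forward_start_call(paths, i1, i2, k, r, t2):
    """Call on the return S_{T2}/S_{T1} with relative strike k, discounted from T2."""
    ratio = paths[:, i2] / paths[:, i1]
    return np.exp(-r * t2) * np.maximum(ratio - k, 0.0).mean()

# Two toy paths; both grow by 10% between grid index 1 and grid index 2.
toy_paths = np.array([[100.0, 110.0, 121.0],
                      [100.0,  90.0,  99.0]])
price = forward_start_call(toy_paths, i1=1, i2=2, k=1.0, r=0.0, t2=1.0)
```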

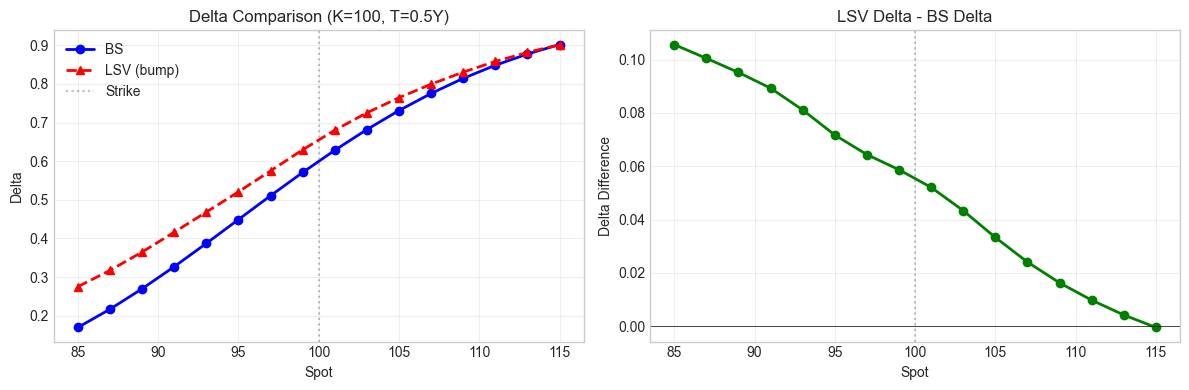

14.9. Greeks Comparison

Compare delta under different models. For LSV we use finite difference bumping.

ATM Delta: BS=0.6283, LSV=0.6804
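Finite-difference bumping works best with common random numbers: both bumped prices reuse the same normal draws, so most of the Monte Carlo noise cancels in the difference. The sketch below shows the idea on a GBM pricer as a stand-in for the LSV pricer; names, bump size, and parameters are assumptions.

```python
import numpy as np
from math import erf, log, sqrt

def mc_call(S0, K, T, r, sigma, z):
    """Discounted mean call payoff under GBM, driven by the supplied normals z."""
    ST = S0 * np.exp((r - 0.5 * sigma**2) * T + sigma * np.sqrt(T) * z)
    return np.exp(-r * T) * np.maximum(ST - K, 0.0).mean()

def bump_delta(S0, K, T, r, sigma, z, rel_bump=0.01):
    """Central finite difference in spot; reusing z in both legs cancels most MC noise."""
    h = rel_bump * S0
    up = mc_call(S0 + h, K, T, r, sigma, z)
    down = mc_call(S0 - h, K, T, r, sigma, z)
    return (up - down) / (2.0 * h)

rng = np.random.default_rng(42)
z = rng.standard_normal(200_000)
delta_mc = bump_delta(100.0, 100.0, 1.0, 0.05, 0.18, z)

# Closed-form BS delta N(d1) for comparison.
d1 = (log(100.0 / 100.0) + (0.05 + 0.5 * 0.18**2) * 1.0) / (0.18 * sqrt(1.0))
delta_bs = 0.5 * (1.0 + erf(d1 / sqrt(2.0)))
```

Under LSV the bump also needs a convention for whether the leverage surface moves with spot, which this GBM stand-in does not address.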

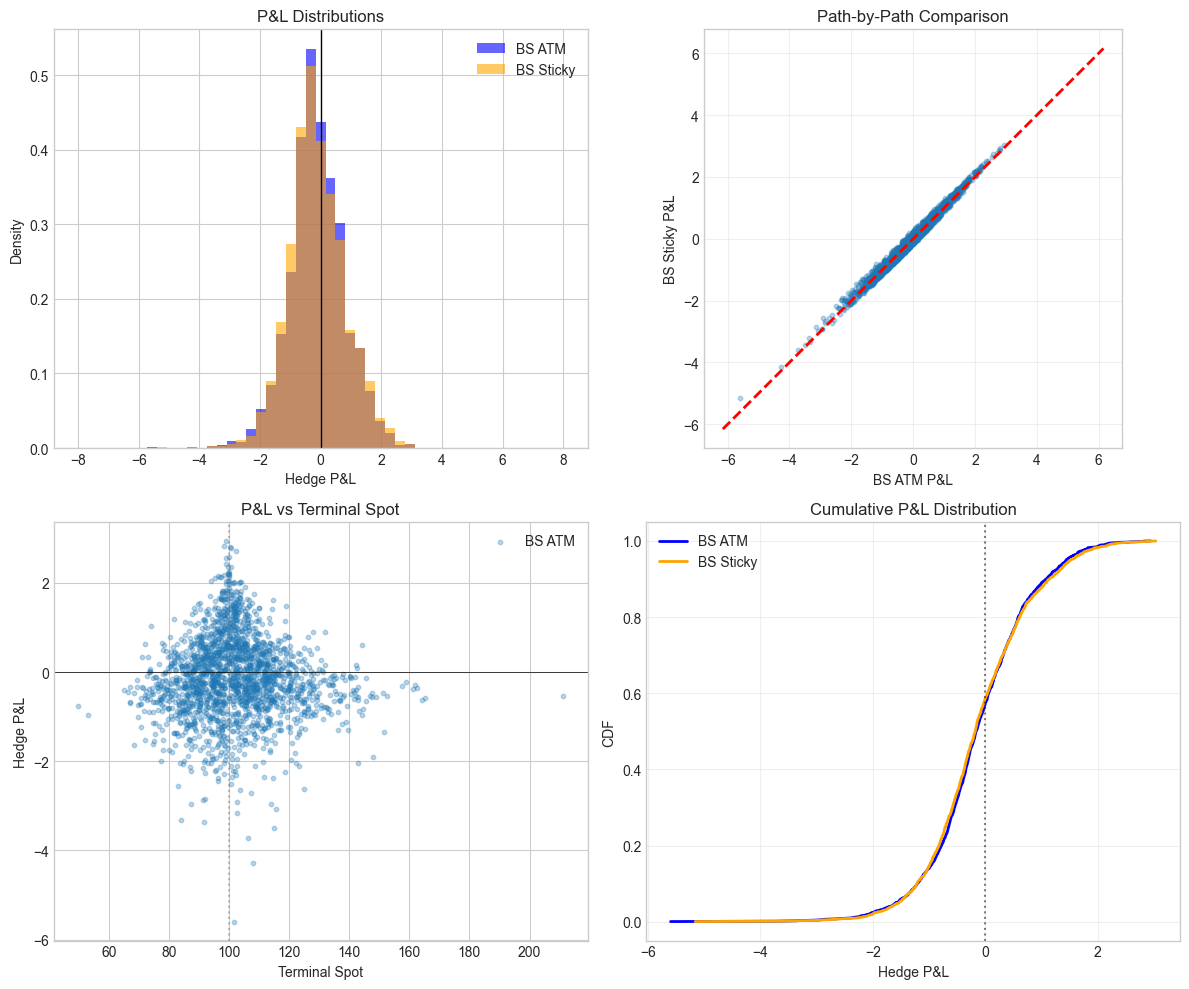

14.10. Hedging P&L Simulation

Delta-hedge a short call where reality follows LSV dynamics. Compare two hedges: BS delta computed at the ATM vol and BS delta computed at the sticky-strike vol.

Running hedge simulation on 2000 paths (T=0.5Y)...

Hedge P&L Statistics:

| Model | Mean | Std | 5th% | 95th% |
|---|---|---|---|---|
| BS ATM | -0.1136 | 0.9186 | -1.5820 | 1.4378 |
| BS Sticky | -0.1145 | 0.9369 | -1.5307 | 1.5175 |
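The mechanics of such a hedge simulation can be sketched as a self-financing loop: sell the call, hold BS delta shares, rebalance at every grid point, and settle at expiry. The example below is a minimal illustration on GBM paths, not the LSV paths used for the table, and every name and parameter in it is hypothetical.

```python
import numpy as np
from math import erf

_erf = np.vectorize(erf)  # math.erf applied elementwise, avoids a SciPy dependency

def norm_cdf(x):
    return 0.5 * (1.0 + _erf(x / np.sqrt(2.0)))

def bs_d1(S, K, tau, r, sigma):
    return (np.log(S / K) + (r + 0.5 * sigma**2) * tau) / (sigma * np.sqrt(tau))

def bs_call(S, K, tau, r, sigma):
    d1 = bs_d1(S, K, tau, r, sigma)
    return S * norm_cdf(d1) - K * np.exp(-r * tau) * norm_cdf(d1 - sigma * np.sqrt(tau))

def hedge_pnl(paths, K, T, r, hedge_vol):
    """Terminal P&L of a short call, delta-hedged with BS delta at each grid point."""
    n_steps = paths.shape[1] - 1
    dt = T / n_steps
    delta = norm_cdf(bs_d1(paths[:, 0], K, T, r, hedge_vol))
    # Receive the premium, buy delta shares, keep the rest in cash.
    cash = bs_call(paths[:, 0], K, T, r, hedge_vol) - delta * paths[:, 0]
    for i in range(1, n_steps):
        new_delta = norm_cdf(bs_d1(paths[:, i], K, T - i * dt, r, hedge_vol))
        cash = cash * np.exp(r * dt) - (new_delta - delta) * paths[:, i]
        delta = new_delta
    cash = cash * np.exp(r * dt)  # accrue interest over the last step
    return cash + delta * paths[:, -1] - np.maximum(paths[:, -1] - K, 0.0)

# Stand-in dynamics: GBM with the same vol as the hedge (assumption).
rng = np.random.default_rng(7)
n_paths, n_steps, T, r, sigma = 2000, 126, 0.5, 0.05, 0.20
dt = T / n_steps
z = rng.standard_normal((n_paths, n_steps))
log_paths = np.cumsum((r - 0.5 * sigma**2) * dt + sigma * np.sqrt(dt) * z, axis=1)
paths = 100.0 * np.exp(np.hstack([np.zeros((n_paths, 1)), log_paths]))
pnl = hedge_pnl(paths, K=100.0, T=T, r=r, hedge_vol=sigma)
print(f"mean={pnl.mean():.4f}, std={pnl.std():.4f}")
```

When the hedge vol matches the simulated vol, the residual P&L comes only from discrete rebalancing, which gives a baseline against which the LSV results can be read.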

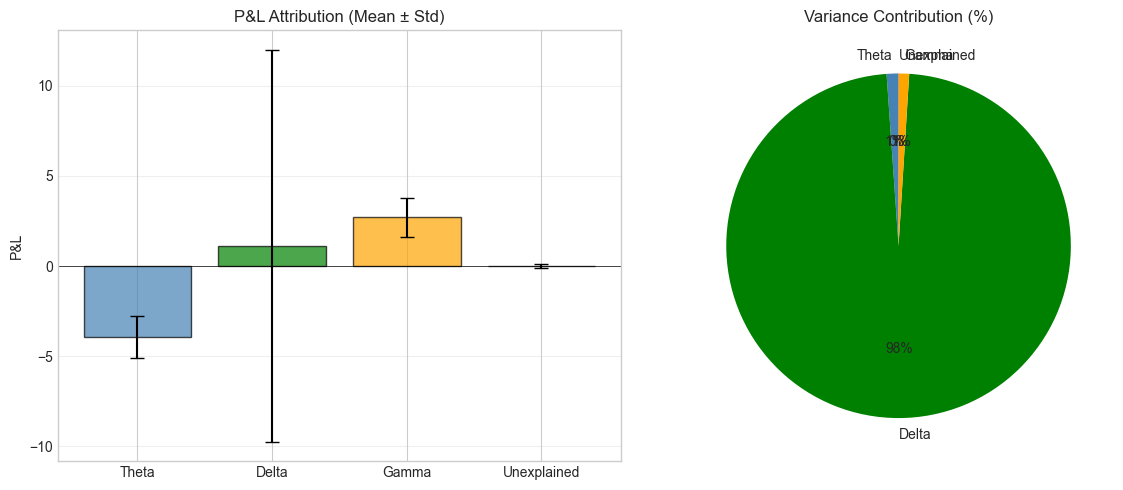

14.11. P&L Attribution

Decompose hedge P&L into Greeks components: theta, delta, gamma, unexplained.
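Per time step, such a decomposition is typically the second-order Taylor expansion of the option value. The form below is a sketch, since the exact convention used for the table is not shown:

\[
\delta V \;\approx\;
\underbrace{\Theta\,\delta t}_{\text{theta}}
+ \underbrace{\Delta\,\delta S}_{\text{delta}}
+ \underbrace{\tfrac{1}{2}\Gamma\,(\delta S)^2}_{\text{gamma}}
+ \underbrace{\varepsilon}_{\text{unexplained}}.
\]

The residual \(\varepsilon\) collects higher-order terms and any sensitivity to variance that the three Greeks do not capture.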

P&L Attribution Summary:

| Component | Mean | Std |
|---|---|---|
| Theta | -3.9269 | 1.1559 |
| Delta | 1.1270 | 10.8600 |
| Gamma | 2.7123 | 1.0845 |
| Unexplained | -0.0008 | 0.1251 |
| Total | -0.0884 | 10.5387 |

Theta/Gamma Ratio: -1.45

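(In a BS world with zero rates, \(\Theta = -\tfrac{1}{2}\sigma^2 S^2 \Gamma\), so theta and gamma P&L offset on average and this ratio would be close to -1.)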

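In principle, LSV gives exact vanilla calibration with realistic forward dynamics, although the repricing run above still shows a mean IV error of about -111 bps, which the diagnostics attribute to unstable local vol inputs. Delta choice matters less than expected for vanilla P&L variance (std 0.92 for BS ATM vs 0.94 for sticky-strike): the unhedgeable vol component dominates, the same conclusion as under pure Heston.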

References: