9. Pricing American Options with the COS Method#

This notebook implements the COS method for American options from Fang and Oosterlee [2009].

The challenge with American options is the early exercise boundary—at each time step, we need to decide whether to exercise or continue. The COS method handles this by working backwards in time: project option values onto Fourier coefficients, propagate one step back using the characteristic function, reconstruct on a grid, and take the max with the exercise value.

We build three versions with increasing efficiency:

Original: Dense grid with trapezoidal integration — slow but clear

Matrix: N-point grid with matrix multiply — \(O(N^2)\) per step

FFT: Symmetric extensions for cosine/sine transforms — \(O(N \log N)\) per step

The FFT version matches the complexity claimed in the paper, though the implementation details aren’t obvious (symmetric FFT extensions, interior scaling factors, etc.).

Reference (Binomial N=2000): $6.089990

COS (N=128, M=50): $6.127223 (error: 0.611%)

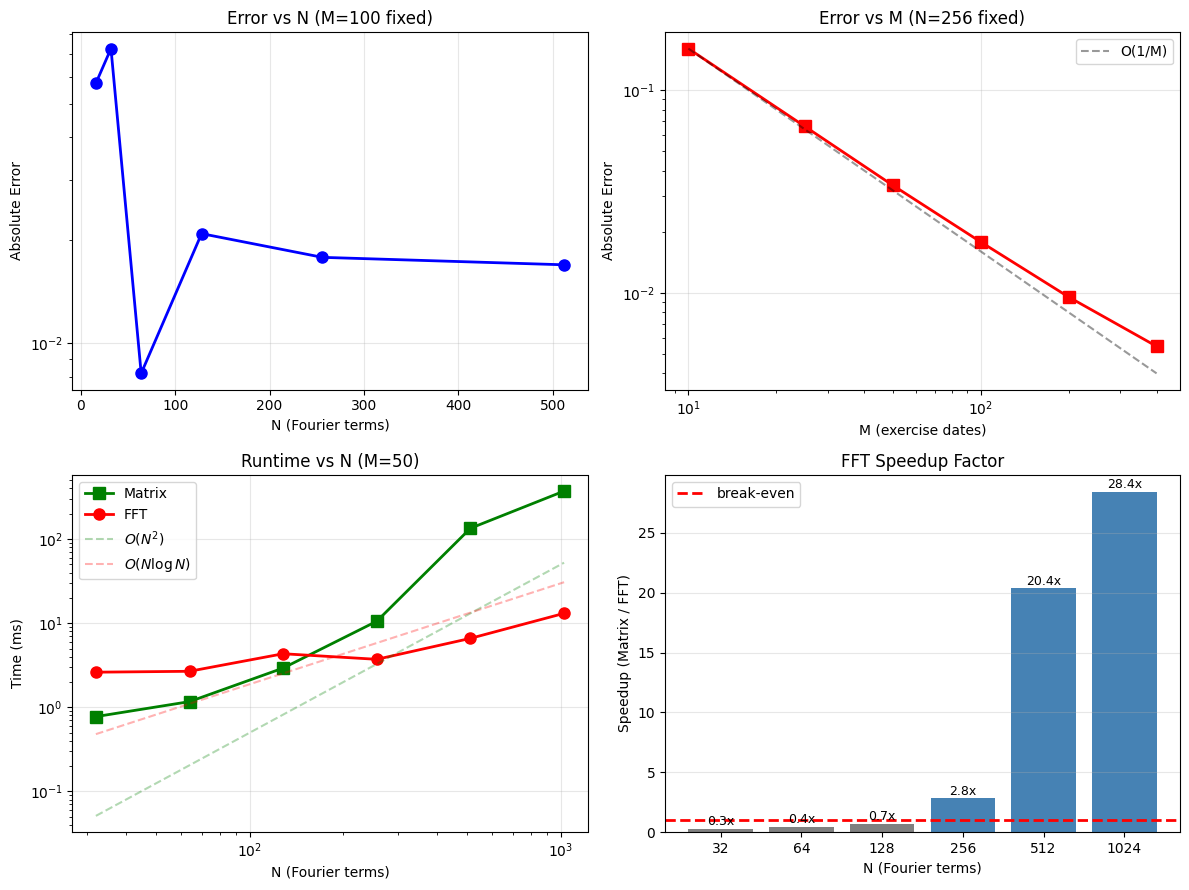

9.1. Convergence Analysis#

Two sources of error in the COS method:

Spectral (N): truncating the Fourier series

Temporal (M): discretizing the exercise opportunities

We analyze both, plus runtime scaling to verify the claimed complexity.

9.2. Getting to \(O(N \log N)\): The FFT Implementation#

The paper claims \(O(N \log N)\) per time step but doesn’t explain how. Here’s what’s involved.

9.2.1. The bottleneck#

Each backward step requires:

Reconstruction (coefficients → grid): compute continuation values

Projection (grid → coefficients): compute new Fourier coefficients

Both involve \(N\) terms at \(N\) points—naively \(O(N^2)\).

9.2.2. Splitting real and imaginary parts#

The reconstruction involves: $\(\text{Re}\{\varphi_k e^{i\theta_{kj}}\} = \text{Re}\{\varphi_k\}\cos(\theta_{kj}) - \text{Im}\{\varphi_k\}\sin(\theta_{kj})\)$

This gives two separate sums (cosine and sine) that we can compute independently.

9.2.3. Using FFT symmetry#

The trick is that cosine sums can be computed via FFT of an even-extended sequence, and sine sums via odd-extended sequences. With FFT length \(M = 2(N-1)\):

Even extension: \([a_0, a_1, \ldots, a_{N-1}, a_{N-2}, \ldots, a_1]\) → real FFT output gives cosine sum

Odd extension: \([0, b_1, \ldots, b_{N-2}, 0, -b_{N-2}, \ldots, -b_1]\) → imaginary FFT output gives sine sum

9.2.4. The interior scaling#

When I first implemented this, results were wrong by a factor of 2 on interior terms. The issue: even extension doubles the contribution of interior points (they appear in both halves). Fix: scale interior coefficients by 0.5 before extension.

a_scaled = a.copy()

a_scaled[1:-1] *= 0.5 # Interior terms only

This took a while to debug.

9.3. Conclusion#

The COS method works well for American options. The FFT version is worth using for \(N > 200\) or so—below that, the matrix version is simpler and fast enough.

All three implementations give the same prices (to machine precision), with ~0.5-1% error vs binomial at \(N=128\), \(M=50\). The binomial tree with \(N \geq 500\) gives 3-4 significant digits, which is enough to validate the COS results.